A Developer's Compatibility Checklist

OpenAI Compatible APIs: 4 Gaps Most LLM Gateways Miss

Author: Andrew Zheng

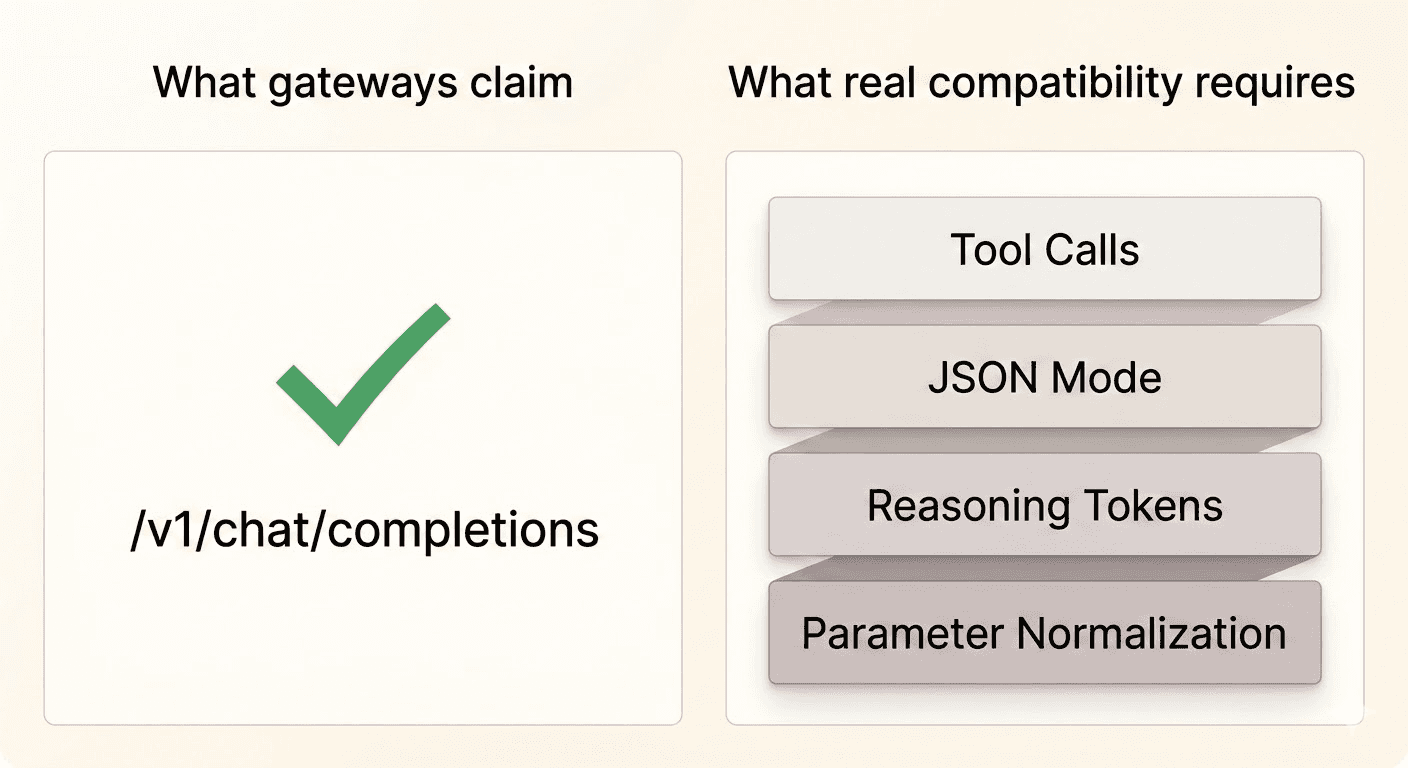

Every LLM gateway on the market claims to be "OpenAI-compatible." It's the first thing listed on their feature page, usually next to a green checkmark.

What they mean is: we support /v1/chat/completions. Which is a bit like a restaurant claiming they "serve food."

The real test of API compatibility only shows up when you try to do something beyond the basics. Switch models mid-product, run Tool Calls through a non-OpenAI model, or pass structured output requirements to a provider that doesn't natively support them. That's when the green checkmark stops meaning anything.

This post explores what true API compatibility actually involves and why getting it right is harder than it appears.

Why OpenAI API Compatibility Is Harder Than It Looks

When OpenAI launched the ChatGPT API, they defined a schema that became the de facto industry standard. Every provider since has had to make a choice: adopt OpenAI's schema, build their own, or do some hybrid.

Most chose a hybrid, which means today's LLM landscape looks like this:

- OpenAI has tools (originally functions) and returns tool_calls (originally function_call) objects.
- Claude has tools and returns tool_use content blocks with different field names.
- Gemini has function_declarations and returns function_call parts with yet another structure.
- Open-source models like Llama 3 often have no native Tool Call support at all.

This isn't anyone's fault. These APIs evolved independently, with different design philosophies and timelines. But the result for anyone building on top of multiple models is a maintenance nightmare unless something in the middle normalizes it all.

That "something in the middle" is what a gateway's compatibility layer is supposed to do. Most don't do it well.

Where OpenAI Compatible APIs Actually Break

1. Tool Call Normalization

Tool Calls (the ability for a model to request that your application run a function and return the result) are arguably the most important capability in production AI systems. Agents, structured pipelines, and anything with external data retrieval depend on them.

The schema differences between providers are not cosmetic. They're structural. The field names differ, the nesting differs, and the way parallel tool calls are expressed differs. If your gateway just does request forwarding, a Tool Call formatted for OpenAI will either fail silently or throw an error on Claude, and vice versa.
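To make the structural difference concrete, here is a minimal Python sketch of the two conversions a gateway needs for one provider pair: OpenAI Chat Completions tool definitions going in, and Anthropic Messages tool_use blocks coming back out. The field names follow the two public APIs; the function names are hypothetical, and a real gateway would also have to handle tool results, tool_choice, and streaming.

```python
import json


def openai_tools_to_anthropic(tools: list) -> list:
    """Convert OpenAI `tools` entries into Anthropic Messages `tools` entries."""
    converted = []
    for tool in tools:
        fn = tool["function"]
        converted.append({
            "name": fn["name"],
            "description": fn.get("description", ""),
            # OpenAI calls the JSON Schema "parameters"; Anthropic calls it "input_schema".
            "input_schema": fn.get("parameters", {"type": "object", "properties": {}}),
        })
    return converted


def anthropic_content_to_openai_message(content_blocks: list) -> dict:
    """Turn Anthropic response content blocks into an OpenAI-style assistant message."""
    text_parts = []
    tool_calls = []
    for block in content_blocks:
        if block["type"] == "text":
            text_parts.append(block["text"])
        elif block["type"] == "tool_use":
            # Each tool_use block becomes one entry, so parallel calls survive the trip.
            tool_calls.append({
                "id": block["id"],
                "type": "function",
                "function": {
                    "name": block["name"],
                    # Anthropic returns a parsed object; OpenAI clients expect a JSON string.
                    "arguments": json.dumps(block["input"]),
                },
            })
    message = {"role": "assistant", "content": "".join(text_parts) or None}
    if tool_calls:
        message["tool_calls"] = tool_calls
    return message
```

Note the arguments field: forwarding Anthropic's object unchanged would break any client that calls json.loads on it, which is exactly the kind of failure a pure request-forwarding gateway produces.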

The situation gets worse with open-source models. The original Llama 3 release and many similar models have no native tool-calling mechanism. They can follow natural-language instructions, though, so the practical way to get consistent tool-use behavior is to inject a ReAct-style prompt that simulates function calling through text, then parse the output.
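A minimal sketch of that prompt-and-parse loop, assuming a hypothetical "Action: / Action Input:" text convention borrowed from common ReAct prompts. The template wording, the regex, and the function names are illustrative assumptions, not Infron's actual template.

```python
import json
import re

# Hypothetical ReAct-style template; real templates are tuned per model.
REACT_TEMPLATE = """You can call these tools:
{tool_list}

To call a tool, reply with exactly:
Action: <tool name>
Action Input: <JSON object of arguments>
Otherwise, reply with:
Final Answer: <your answer>"""

# Matches one tool request; DOTALL lets the JSON arguments span several lines.
ACTION_RE = re.compile(
    r"Action:\s*(?P<name>[\w.-]+)\s*\nAction Input:\s*(?P<args>\{.*\})", re.DOTALL
)


def build_react_system_prompt(tools: list) -> str:
    """Describe OpenAI-format tools in plain text for a model without native tool calling."""
    lines = [
        f"- {t['function']['name']}: {t['function'].get('description', '')} "
        f"(arguments schema: {json.dumps(t['function'].get('parameters', {}))})"
        for t in tools
    ]
    return REACT_TEMPLATE.format(tool_list="\n".join(lines))


def parse_react_output(text: str, call_id: str = "call_0") -> dict:
    """Convert the model's text reply into an OpenAI-style assistant message."""
    match = ACTION_RE.search(text)
    if match is None:
        # No tool request: return the final answer as ordinary content.
        return {"role": "assistant", "content": text.split("Final Answer:", 1)[-1].strip()}
    # json.loads raises on malformed arguments; a gateway would retry or return an error.
    args = json.loads(match.group("args"))
    return {
        "role": "assistant",
        "content": None,
        "tool_calls": [{
            "id": call_id,
            "type": "function",
            "function": {"name": match.group("name"), "arguments": json.dumps(args)},
        }],
    }
```

The caller only ever sees the message returned by parse_react_output, which has the same shape as a native tool call.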

How Infron handles it: Infron maintains an internal canonical format for Tool Calls. On the way in, requests are converted from OpenAI schema to the target model's native format. On the way out, responses are converted back to OpenAI schema before reaching your application. For models without native support, Infron automatically injects a ReAct prompt template and parses the structured output — the calling application sees a standard function_call response and has no idea what happened underneath. The conversion overhead is kept under 1ms through pre-compiled templates.

2. JSON Mode

Structured output — getting a model to return valid JSON rather than prose — is one of the most common production requirements. Data extraction, classification, API response generation: all of these need reliable JSON output.

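OpenAI's approach is clean: pass response_format: { type: "json_object" } (the API also requires the word "JSON" to appear somewhere in your messages) and the model is constrained to emit valid JSON, as long as the output isn't cut off by the token limit.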

Other providers handle this differently. Claude has historically had no equivalent of OpenAI's JSON mode: to get JSON output, you include the instruction in the system prompt. Some open-source models will attempt JSON when instructed but don't guarantee validity, meaning you can get malformed output that breaks a downstream JSON parser.

The practical consequence: if your gateway only passes response_format through to the provider, it silently fails when you switch to Claude or a model that doesn't support it. Your application crashes on a JSON parse error, and the root cause isn't obvious.

How Infron handles it: When a request includes response_format: { type: "json_object" }, Infron checks whether the target model supports it natively. If yes, the parameter is passed through as-is. If not, Infron injects a system prompt instruction requiring JSON output, then validates the response before returning it to the caller. If the model returns malformed JSON, Infron attempts repair before passing it through — and if repair fails, it returns a structured error rather than broken JSON.
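The pass-through, prompt-injection, validate, repair, and error flow described above can be sketched roughly as follows. This is a minimal illustration under stated assumptions: the instruction text, the error type name, and the "keep the outermost braces" repair heuristic are all hypothetical, and production repair logic would be more thorough.

```python
import json

# Hypothetical instruction text; real gateways tune this per model.
JSON_INSTRUCTION = "Respond only with a single valid JSON object and no other text."


def adapt_json_mode(request: dict, supports_json_mode: bool) -> dict:
    """Keep response_format for models that support it; otherwise use a prompt instruction."""
    wants_json = request.get("response_format", {}).get("type") == "json_object"
    if not wants_json or supports_json_mode:
        return request
    adapted = {k: v for k, v in request.items() if k != "response_format"}
    # Still in OpenAI shape here; translation to the provider's format happens later.
    adapted["messages"] = [{"role": "system", "content": JSON_INSTRUCTION}, *request["messages"]]
    return adapted


def _parse_object(text: str):
    """Return a dict if text is a JSON object, else None."""
    try:
        value = json.loads(text)
    except json.JSONDecodeError:
        return None
    return value if isinstance(value, dict) else None


def validate_json_output(text: str) -> dict:
    """Validate model output, try a minimal repair, and fall back to a structured error."""
    value = _parse_object(text)
    if value is None:
        # Minimal repair: keep only the outermost {...} span, dropping prose or code fences.
        start, end = text.find("{"), text.rfind("}")
        if start != -1 and end > start:
            value = _parse_object(text[start:end + 1])
    if value is None:
        return {"error": {"type": "invalid_json_output",
                          "message": "Model output could not be parsed as a JSON object."}}
    return {"content": value}
```

Returning a structured error instead of the raw text means the failure surfaces at the gateway boundary with a clear cause, rather than as a JSON parse exception deep inside the application.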

3. Reasoning Token Passthrough

The o1 and o3 model families introduced a new concept: reasoning tokens. The model "thinks" before responding, and those thinking tokens never appear in the response text, yet they are reported in usage and billed as output tokens.

For most gateways, this is a billing problem waiting to happen. If the reasoning token count (which OpenAI reports as usage.completion_tokens_details.reasoning_tokens) isn't correctly surfaced in the response, your application's cost tracking will be wrong. You'll see lower-than-expected token counts and then get a billing surprise at month-end.

The reverse problem also exists: for models that don't generate reasoning tokens, the field should either be 0 or be omitted entirely. If a gateway returns a non-zero value for a model that doesn't support reasoning, your cost attribution is garbage in a different direction.

How Infron handles it: For OpenAI o1/o3 series, Infron ensures usage.reasoning_tokens is correctly extracted from the provider response and passed through to the caller. For all other models, the field is returned as 0 or omitted according to your SDK's expectations — maintaining backward compatibility without breaking clients that check for the field's existence.
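Here is a minimal sketch of the usage normalization and the cost arithmetic it protects, assuming the upstream usage has already been mapped to OpenAI field names. The emit_zero_when_absent switch stands in for the "0 or omitted" choice; the function names and prices are hypothetical.

```python
def normalize_usage(raw_usage: dict, emit_zero_when_absent: bool = True) -> dict:
    """Build an OpenAI-style usage block, keeping reasoning tokens when present."""
    prompt = raw_usage.get("prompt_tokens", 0)
    completion = raw_usage.get("completion_tokens", 0)
    details = raw_usage.get("completion_tokens_details") or {}
    reasoning = details.get("reasoning_tokens")
    usage = {"prompt_tokens": prompt, "completion_tokens": completion,
             "total_tokens": prompt + completion}
    if reasoning is not None:
        usage["completion_tokens_details"] = {"reasoning_tokens": reasoning}
    elif emit_zero_when_absent:
        # Non-reasoning model: report 0 for clients that expect the field to exist.
        usage["completion_tokens_details"] = {"reasoning_tokens": 0}
    return usage


def estimate_cost(usage: dict, input_price_per_million: float,
                  output_price_per_million: float) -> float:
    """Estimate request cost in dollars from per-million-token prices."""
    # OpenAI counts reasoning tokens inside completion_tokens, so they are not added again.
    return (usage["prompt_tokens"] * input_price_per_million
            + usage["completion_tokens"] * output_price_per_million) / 1_000_000
```

With 100 prompt tokens, 1,000 reasoning tokens, and 100 visible output tokens, completion_tokens is 1,100; a cost tracker that counted only the visible text would bill for 100 output tokens and undercount by a factor of eleven.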

Beyond billing, Infron also normalizes reasoning control parameters. Whether a model calls it reasoning_effort, thinking, or a budget-based token parameter, Infron maps your single standard parameter to whatever the target model expects.
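One way to map a single effort setting onto the different provider knobs is sketched below. OpenAI's reasoning_effort, Anthropic's thinking block with budget_tokens (which must be at least 1,024 and below max_tokens), and Gemini's thinkingConfig.thinkingBudget are the providers' own parameters; the effort-to-budget numbers and the function name are hypothetical.

```python
# Hypothetical effort-to-budget table; real values would be tuned per model.
EFFORT_BUDGETS = {"low": 1024, "medium": 4096, "high": 16384}


def map_reasoning_effort(provider: str, effort: str, max_tokens: int) -> dict:
    """Translate one standard effort level into provider-specific request fields."""
    if effort not in EFFORT_BUDGETS:
        raise ValueError(f"unknown reasoning effort: {effort!r}")
    if provider == "openai":
        # OpenAI reasoning models accept the effort level directly.
        return {"reasoning_effort": effort}
    budget = EFFORT_BUDGETS[effort]
    if provider == "anthropic":
        # Anthropic requires budget_tokens >= 1024 and strictly below max_tokens.
        budget = min(budget, max_tokens - 1)
        if budget < 1024:
            raise ValueError("max_tokens is too small for extended thinking")
        return {"thinking": {"type": "enabled", "budget_tokens": budget}}
    if provider == "gemini":
        return {"generationConfig": {"thinkingConfig": {"thinkingBudget": budget}}}
    raise ValueError(f"unsupported provider: {provider!r}")
```

Capping the Anthropic budget below max_tokens matters because an effort level that is valid for one request can produce an invalid thinking budget for another with a smaller output limit.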

4. Hyperparameter Semantic Drift

The parameters that look most consistent across providers are actually some of the most inconsistent in practice.

temperature is the canonical example. OpenAI accepts values from 0 to 2. Claude accepts 0 to 1, and some Gemini versions have used a 0 to 1 range as well. Pass temperature: 1.5 to one of those models through a gateway that doesn't handle this, and you'll get an error or, worse, silent clamping that changes your model's behavior without any indication.
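The difference between rescaling and clamping is easy to see with numbers. This sketch assumes the caller uses OpenAI's 0 to 2 range and the target accepts 0 to 1; the function names are hypothetical.

```python
def rescale_temperature(value: float, source_range=(0.0, 2.0), target_range=(0.0, 1.0)) -> float:
    """Map a temperature linearly from the caller's range onto the target model's range."""
    src_lo, src_hi = source_range
    tgt_lo, tgt_hi = target_range
    if not src_lo <= value <= src_hi:
        raise ValueError(f"temperature {value} is outside {source_range}")
    fraction = (value - src_lo) / (src_hi - src_lo)
    return tgt_lo + fraction * (tgt_hi - tgt_lo)


def clamp_temperature(value: float, target_range=(0.0, 1.0)) -> float:
    """Cut the value off at the target range's bounds (the 'silent clamping' behavior)."""
    lo, hi = target_range
    return max(lo, min(hi, value))


# temperature: 1.5 on a 0-2 scale
print(rescale_temperature(1.5))  # 0.75: keeps its relative position in the range
print(clamp_temperature(1.5))    # 1.0: every value from 1.0 to 2.0 collapses to the maximum
```

Neither choice is free: rescaling preserves ordering but maps OpenAI's common default of 1.0 to 0.5 on the target, while clamping leaves low values untouched but erases the difference between 1.2 and 2.0. Whichever rule a gateway picks, it should be documented.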

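max_tokens has a meaning problem. For OpenAI chat models, it caps output tokens, while the o1 and o3 reasoning models reject it in favor of max_completion_tokens, which also counts the hidden reasoning tokens. Claude's max_tokens is an output cap too, but it is required on every request. Some open-source inference stacks cap total length instead (input + output combined), as the max_length generation parameter in Hugging Face Transformers does. Pass a max_tokens value meant as an output-only limit to such a model through a naive gateway, and you might get responses cut off far earlier than expected.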
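A minimal sketch of translating one output-only cap into each of those semantics. The semantics labels are hypothetical names for this illustration; the output parameter names (max_tokens, max_completion_tokens, max_length) are the real ones discussed above.

```python
def translate_max_tokens(max_tokens: int, prompt_tokens: int, semantics: str) -> dict:
    """Map an OpenAI-style output cap onto a target whose limit has the given semantics."""
    if max_tokens <= 0:
        raise ValueError("max_tokens must be positive")
    if semantics == "output":
        # OpenAI chat models and Claude: the cap applies to generated tokens only.
        return {"max_tokens": max_tokens}
    if semantics == "completion_incl_reasoning":
        # OpenAI o-series: the cap also covers hidden reasoning, so visible output may be shorter.
        return {"max_completion_tokens": max_tokens}
    if semantics == "total_length":
        # Total-length caps such as Hugging Face max_length count the prompt too.
        return {"max_length": prompt_tokens + max_tokens}
    raise ValueError(f"unknown semantics: {semantics!r}")


# A 1,200-token prompt with a 500-token output budget:
print(translate_max_tokens(500, 1200, "total_length"))  # {'max_length': 1700}
```

Forwarding max_tokens: 500 unchanged as a total-length limit would leave no room for output at all once the 1,200-token prompt is counted. Where the target also offers an output-only parameter (Transformers has max_new_tokens), using it avoids needing the prompt length.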

top_p and temperature together: many models recommend using one or the other, not both. Some models will error if both are present. A gateway that doesn't handle this will intermittently fail depending on which model it's routing to.

How Infron handles it: Infron maintains a per-model parameter mapping table that handles normalization at the edges. temperature values are rescaled to the target model's accepted range. max_tokens semantics are correctly interpreted for each provider. Conflicting parameters are resolved according to documented priority rules — and the behavior is consistent and predictable, regardless of which model you're routing to.
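A per-model mapping table can be sketched as a small lookup plus a normalization pass. The table entries, the default max_tokens value, and the "temperature wins, top_p is dropped" priority rule are hypothetical choices for illustration; the fact that Claude requires max_tokens and tops out at a temperature of 1 comes from its API.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class ModelParams:
    temperature_max: float             # upper bound of the model's temperature range
    allows_temperature_and_top_p: bool
    default_max_tokens: Optional[int]  # set when the provider requires max_tokens


# Hypothetical table entries for illustration only.
PARAM_TABLE = {
    "openai-chat": ModelParams(2.0, True, None),
    "claude": ModelParams(1.0, False, 1024),
}


def normalize_params(model_family: str, params: dict) -> dict:
    """Apply one model family's parameter rules to an OpenAI-style parameter dict."""
    spec = PARAM_TABLE[model_family]
    out = dict(params)
    if "temperature" in out:
        # Caller values are assumed to be on OpenAI's 0-2 scale.
        out["temperature"] = out["temperature"] * spec.temperature_max / 2.0
    if "temperature" in out and "top_p" in out and not spec.allows_temperature_and_top_p:
        # Assumed priority rule: temperature wins and top_p is dropped.
        del out["top_p"]
    if spec.default_max_tokens is not None:
        out.setdefault("max_tokens", spec.default_max_tokens)
    return out


print(normalize_params("claude", {"temperature": 1.5, "top_p": 0.9}))
# {'temperature': 0.75, 'max_tokens': 1024}
```

Keeping the rules in data rather than scattered conditionals is what makes the behavior predictable: the same request always produces the same normalized parameters for a given model family, and adding a model means adding a table row.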

Why Maintaining OpenAI API Compatibility Is an Ongoing Engineering Problem

Getting all of the above right at a point in time is hard. Keeping it right over time is harder.

OpenAI ships API updates frequently. Claude's tool-calling schema has changed across major versions. New models launch with new parameter names and new edge cases. An open-source model that needed ReAct prompting six months ago might have native Tool Call support today.

This isn't a one-time engineering project. It's ongoing maintenance — tracking provider changelogs, testing new model releases against the full compatibility matrix, updating normalization logic, and catching regressions before they reach production.

Infron has a dedicated team that owns this compatibility layer. When a provider ships an API change, Infron's normalization is updated and tested before that change propagates to production traffic. The goal is that switching models or having a provider silently update their API should never require a code change on the caller's side.

How to Test Any LLM Gateway for Real

"OpenAI-compatible" claims are easy to make. Here's a short checklist for verifying them:

Tool Calls: Write a test that sends a Tool Call request using OpenAI schema to your three most-used non-OpenAI models. Check that the response comes back in standard format and that parallel tool calls are handled correctly.

JSON Mode: Send response_format: { type: "json_object" } to a Claude model and an open-source model. Check that you get valid JSON back, not an error or malformed output.

Parameter edge cases: Send temperature: 1.8 and max_tokens: 500 to a Claude model (whose temperature range tops out at 1) and to a Gemini model. Check that each request succeeds and the output length is roughly what you'd expect.

Cross-model consistency: Run the same prompt through GPT-4, Claude Sonnet, and a Llama-family model. The responses will differ — but if the format of the response differs in ways your application can't handle, that's a gateway problem, not a model problem.
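The first two checks can be automated with a couple of response validators. This is a minimal sketch: how you send the requests (for example, an OpenAI SDK client pointed at your gateway's base URL) is left to you, and the helper names are hypothetical. The checks rely on the standard OpenAI response shape, where a tool-calling turn ends with finish_reason "tool_calls" and each call's arguments are a JSON string.

```python
import json


def check_tool_call_response(response: dict, expected_calls: int) -> list:
    """Return problems with an OpenAI-format tool-call response; an empty list means pass."""
    choices = response.get("choices") or []
    if not choices:
        return ["response has no choices"]
    choice = choices[0]
    problems = []
    if choice.get("finish_reason") != "tool_calls":
        problems.append(f"finish_reason is {choice.get('finish_reason')!r}, expected 'tool_calls'")
    calls = choice.get("message", {}).get("tool_calls") or []
    if len(calls) != expected_calls:
        problems.append(f"expected {expected_calls} tool calls, got {len(calls)}")
    for call in calls:
        fn = call.get("function") or {}
        if call.get("type") != "function" or not fn.get("name"):
            problems.append(f"tool call {call.get('id')!r} is not a standard function call")
            continue
        try:
            args = json.loads(fn.get("arguments", ""))
        except (TypeError, json.JSONDecodeError):
            problems.append(f"tool call {call.get('id')!r} has non-JSON arguments")
            continue
        if not isinstance(args, dict):
            problems.append(f"tool call {call.get('id')!r} arguments are not a JSON object")
    return problems


def check_json_mode_response(response: dict) -> list:
    """Return problems with a JSON-mode response; an empty list means pass."""
    choices = response.get("choices") or []
    if not choices:
        return ["response has no choices"]
    content = choices[0].get("message", {}).get("content") or ""
    try:
        value = json.loads(content)
    except json.JSONDecodeError:
        return ["content is not valid JSON"]
    if not isinstance(value, dict):
        return ["content is JSON but not an object"]
    return []
```

Asking for a prompt that should trigger two calls (say, the weather in two cities) and passing expected_calls=2 exercises the parallel tool call path as well as the basic format.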

If your gateway passes all four of these, the compatibility layer is probably real. If it fails on any of them, you've found the gap that will bite you in production.

What Breaks When OpenAI Compatible API Support Is Shallow

The damage from shallow compatibility isn't always immediate. It tends to accumulate.

An application that works fine with GPT-4 will break in subtle ways when you try to route 20% of traffic to Claude for cost testing. Tool Calls start failing for a subset of users. JSON parsing errors show up in logs at low enough frequency that they look like flakiness rather than a systematic issue. Your team spends a week debugging something that turns out to be a parameter normalization gap.

The engineering time spent on that debugging is real. So is the cost of not running cost-optimization experiments because switching models feels too risky.

Real API compatibility isn't a checkbox. It's what makes multi-model infrastructure actually usable — which is the entire point of having a gateway in the first place.

Infron's compatibility layer covers Tool Call normalization, JSON Mode adaptation, Reasoning Token passthrough, and hyperparameter mapping across 500+ models and 100+ providers. If you want to test it against your own use cases, you can sign up at infron.ai and run the checks above with your actual workload.