Proactive Multimodal Security for High-Stakes Enterprise Workflows

The SASA Revolution: How Internal Semantics Redefine AI Safety

Date

Author

Andrew Zheng

Introduction: The Achilles’ Heel of AI Safety

As large AI models sweep across the world, a concerning reality is emerging: vision-language models (LVLMs) are significantly less secure than pure text models. Attackers only need to embed malicious text inside an image or craft carefully aligned multimodal prompts to bypass model safeguards and induce harmful outputs.

This is not a theoretical risk. Studies show that mainstream LVLMs experience failure rates exceeding 90% when confronted with adversarial attacks. For industries such as finance, healthcare, and education—where data protection requirements are stringent—this implies:

• Potential leakage of customer privacy

• Harmful content bypassing content moderation

• Significant regulatory and legal exposure

• Substantial risks to brand reputation

Traditional safety mechanisms, external filters, keyword detection systems, or post-generation moderation, share a critical flaw: they operate outside the model, lacking access to internal semantic signals, leading to high false-positive rates and slow response.

Today, Infron AI unveils a breakthrough based on its ACM MM 2025 paper, 'Self-Aware Safety Augmentation: Leveraging Internal Semantic Understanding to Enhance Safety in Vision-Language Models'. For the first time, we introduce endogenous safety, empowering models to autonomously detect and neutralize attacks, slashing success rates to under 1%.

1. Technical Background: The Security Dilemma of Vision-Language Models

1.1 Why LVLMs Are More Vulnerable

Models such as GPT-4V, LLaVA, and Qwen-VL integrate an image encoder with a large language model, enabling multimodal understanding but also creating new attack surfaces.

Typical attack methods include:

• Typography attacks — embedding malicious instructions inside images

• Contextual attacks — pairing benign-looking images with harmful textual prompts

• Multi-turn attacks — gradually weakening refusal mechanisms over conversation rounds

1.2 Limitations of Existing Defense Approaches

Method | Mechanism | Core Limitation |

|---|---|---|

External filters | Detect harmful content at input | High false positives; no semantic understanding |

Keyword detection | Match predefined sensitive words | Easily bypassed via synonyms or obfuscation |

Post-generation checks | Review outputs after generation | Slow; wastes compute resources |

Alignment fine-tuning (RLHF) | Train safety into the model | Costly (billions of parameters); limited effectiveness |

2. Core Technical Insight: SASA – Self-Aware Safety Augmentation

Infron’s research uncovers a previously unknown structural mismatch inside LVLMs, leading to the proposal of the SASA technique.

2.1 Key Discovery: The Three-Stage Separation Inside Models

Through deep analysis of LLaVA, MiniGPT-v2, Qwen-VL, etc., we observe the model’s processing pipeline splits into three distinct stages:

Stage 1: Safety Perception (Layers 0–13)

Early layers begin safety detection early on.

Findings:

• Removing the top 5 safety-critical attention heads increases attack success by 47–67%

• Safety mechanisms concentrate in the first 40% of layers

Problem: At this point, semantic understanding is still immature.

Stage 2: Semantic Understanding (Layers 14–20)

t-SNE visualization shows:

• These layers strongly differentiate harmful vs. harmless content

• Rich semantic representations are formed

Problem: This understanding is not transmitted back to early safety layers.

Stage 3: Linguistic Expression (Layers 21–32)

Late layers focus on human-readable language output:

• Higher cross-layer similarity

• Rapid increase in readable vocabulary

• But weakening semantic discrimination

Core Contradiction

The model internally knows the input is harmful, but this knowledge never converts into an actual refusal.

2.2 SASA: Enabling Models to Become Self-Aware

SASA’s core idea:

Use the model’s own semantic understanding to strengthen early safety perception.

Step 1: Semantic Projection

Project semantic features from fused layers back to early safety layers.

Step 2: Linear Probing

Add a lightweight linear classifier at the output layer:

• Only a few hundred KB of parameters

• Can detect risks at the first token

• No need for full response generation

Step 3: Real-time Interception

If ψ(x) > threshold, generation is stopped immediately.

2.3 Technical Advantages — Quantitative Results

Safety Improvement

Dataset | Original ASR | SASA ASR | Reduction |

|---|---|---|---|

MM-SafetyBench | 97.9% | 0.64% | ↓97.3% |

VLGuard | 93.4% | 5.3% | ↓88.1% |

FigStep | 95.2% | 0% | ↓95.2% |

Efficiency Advantages

• 95% less training data

• <1MB probe size

• <100ms latency

• Strong zero-shot generalization

Utility Preservation

Across ScienceQA, VQA, MM-VET, utility drop <1%.

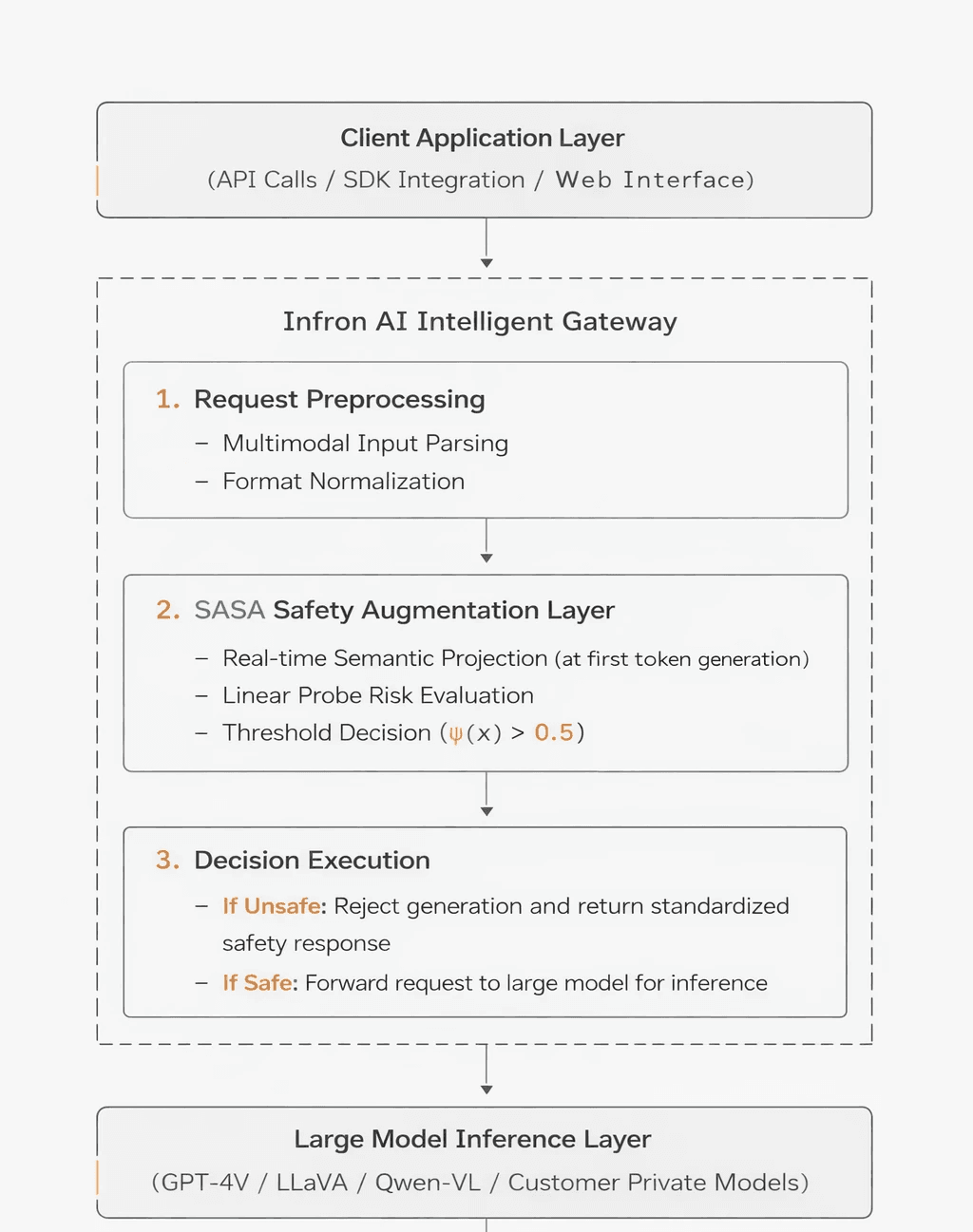

3. Infron Intelligent Gateway: Realizing Endogenous Safety

SASA integrates directly into Infron AI’s gateway architecture.

Core Characteristics

Zero-trust architecture

• Every request is validated

• Not dependent on user identity

• Safety check for every tokenPlug-and-play

• No modification to base model

• Works with any LVLM backend

• Deployable in <1 hourObservability

• Real-time interception metrics

• Risk analysis logs

• Interpretable decisions based on internal activations

4. Data Security and Privacy Protection Mechanisms

Infron’s endogenous safety solution is architecturally designed with inherent and robust privacy protection capabilities.

4.1 Data Minimization Principle

Training Phase

Requires only 5% of data: Compared to traditional methods that necessitate massive amounts of sensitive samples, SASA requires only a small amount of annotated data.

No storage of attack samples: No database of harmful content is established, significantly reducing the risk of data leakage.

Federated Learning compatibility: Supports training probes on the client’s local data, eliminating the need to upload sensitive information.

Inference Phase

Zero Data Retention: Does not record or store any content of customer queries.

Real-time Processing: All judgments are performed in-memory without persistence.

No-Log Mode: Offers an optional fully anonymous processing mode.

4.2 Privacy Protection Technology Stack

Privacy Dimension | Technical Implementation | Protection Effect |

Transmission Security | TLS 1.3 End-to-End Encryption | Prevents Man-in-the-Middle (MITM) attacks |

Storage Security | Zero Data Retention Strategy | Eliminates leakage risks |

Processing Security | In-memory Real-time Computing | Leaves no digital footprint |

Access Control | Role-Based Access Control (RBAC) | Adheres to the Principle of Least Privilege |

Audit Trail | Optional Encrypted Logs | Meets regulatory compliance requirements |

4.3 Compliance Assurance

The design of Infron Intelligent Gateway complies with major global data protection regulations:

✅ GDPR (EU General Data Protection Regulation)

Data Minimization (Art. 5.1.c)

Data Protection by Design and by Default (Art. 25)

Right to Erasure / Right to be Forgotten (Art. 17) – inherently satisfied by Zero Data Retention.

✅ CCPA (California Consumer Privacy Act)

No sale of personal information.

Transparent data processing workflows.

Right to delete user data.

✅ PIPL (China's Personal Information Protection Law)

Explicit disclosure of data purposes.

Principle of Minimum Necessity.

Data localization options (supports on-premises deployment).

Conclusion: A New Era of AI Trust

The launch of SASA (Self-Aware Safety Augmentation) marks the evolution of AI security from external "band-aid" fixes to an endogenous immune system. By bridging the internal gap between perception and understanding, Infron AI empowers models to instinctively neutralize threats, achieving a 99%+ interception rate with zero impact on performance.

Up Next: See how SASA slashes TCO by 93% and outperforms traditional defenses in our full performance and industry case study report [here].

Secure Your Innovation with Infron

Infron is a next-generation AI infrastructure platform that gives enterprises a single, unified way to access AI models, with built-in intelligent routing, cost optimization, and reliability guarantees. With one API, companies can connect to more than 100 AI model providers worldwide, reducing AI costs, simplifying vendor management, and improving system reliability.

Don't let security be the bottleneck of your AI adoption. Start your journey toward endogenous safety, contact the Infron team today.