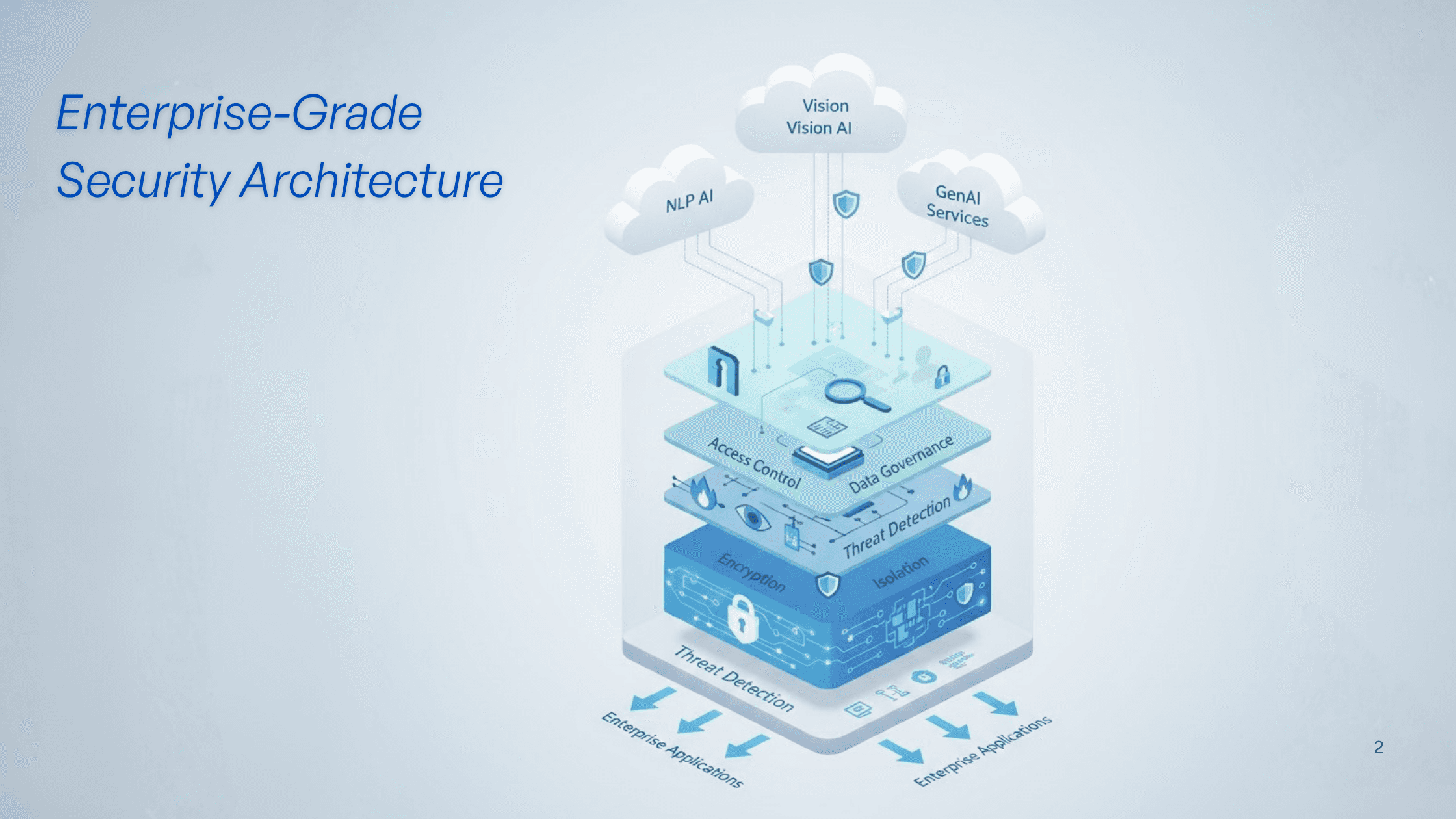

Infron's multi-provider security architecture

AI Infrastructure Security: Protecting Enterprise Data at Scale

Author: Andrew Zheng

At Infron, we believe that enterprise AI adoption hinges on one fundamental principle: trust. As organizations worldwide integrate AI into their critical workflows, from banking and telecommunications to gaming and customer service, security isn’t just a feature; it’s the foundation on which everything else is built.

Today, I want to share how we approach security at Infron, drawing insights from industry best practices while addressing the unique challenges of multi-provider AI infrastructure.

The Trust Equation: Security + Performance + Reliability

When our customers like Pax Historia, YTL AI Labs, and Agnes AI scaled from prototypes to millions of users, they didn’t just need access to the latest AI models—they needed confidence that their data, their users’ privacy, and their business operations were protected at every layer.

This is why we’ve architected Infron AI with security at its core, not as an afterthought.

1. End-to-End Encryption: Your Data, Locked Down

Every connection to Infron AI’s platform is encrypted along the entire request path. Whether you’re routing requests to OpenAI, Anthropic, Google, or any of the 15+ providers we support, your prompts, responses, and metadata remain private.

What this means for you:

Zero visibility into your data from our side (unless explicitly needed for debugging with your permission)

TLS 1.3 encryption in transit

Encrypted storage for any cached or logged data (when enabled)

Think of it as a secure tunnel between your application and the AI provider—no one else gets to peek inside.
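On the client side, you can hold up your end of that tunnel by refusing to negotiate anything older than TLS 1.3. The snippet below is a minimal sketch using only Python's standard `ssl` module; the helper name `make_tls13_context` is ours for illustration and is not part of any Infron SDK.

```python
import ssl


def make_tls13_context() -> ssl.SSLContext:
    """Build a client-side TLS context that rejects anything older than TLS 1.3."""
    # create_default_context() turns on certificate and hostname verification.
    ctx = ssl.create_default_context()
    # Refuse to negotiate TLS 1.2 or earlier.
    ctx.minimum_version = ssl.TLSVersion.TLSv1_3
    return ctx


ctx = make_tls13_context()
```

The resulting context can be passed to standard-library clients, for example `http.client.HTTPSConnection(host, context=ctx)`, so a handshake fails outright if the server cannot speak TLS 1.3.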

2. Environmental Isolation: Impenetrable Boundaries

We operate in a distributed, edge-based architecture spanning global regions. Each customer’s workload runs in isolated environments with strict network segmentation. This means:

No cross-contamination: Your prompts never touch another customer’s data

Provider isolation: Requests to different AI providers are routed through separate secure channels

Failover protection: If one provider goes down, your fallback routes are pre-isolated and ready

Our infrastructure uses container-level isolation and zero-trust networking principles, ensuring that even in the unlikely event of a breach in one component, the blast radius is contained.
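To illustrate the failover idea in the list above, here is a simplified sketch of ordered fallback routing. It is not Infron's routing code: `ProviderError`, `RouteResult`, `call_with_failover`, and the two stand-in providers are hypothetical names, and a real router would also handle timeouts, retries, and health checks.

```python
from dataclasses import dataclass
from typing import Callable, Sequence, Tuple


class ProviderError(Exception):
    """Raised by a provider callable when it cannot serve the request."""


@dataclass(frozen=True)
class RouteResult:
    requested: str  # provider the caller asked for first
    served: str     # provider that actually produced the response
    attempts: int   # routes tried, including the successful one
    text: str


def call_with_failover(
    prompt: str, routes: Sequence[Tuple[str, Callable[[str], str]]]
) -> RouteResult:
    """Try each (name, callable) route in order and return the first success."""
    if not routes:
        raise ValueError("at least one route is required")
    requested = routes[0][0]
    last_error = None
    for attempt, (name, call) in enumerate(routes, start=1):
        try:
            return RouteResult(requested, name, attempt, call(prompt))
        except ProviderError as exc:
            last_error = exc  # remember the failure and move to the next route
    raise ProviderError(f"all {len(routes)} routes failed") from last_error


# Hypothetical stand-ins for real provider clients.
def primary(prompt: str) -> str:
    raise ProviderError("primary is down")


def backup(prompt: str) -> str:
    return f"echo: {prompt}"


result = call_with_failover("hello", [("primary", primary), ("backup", backup)])
```

Keeping both the requested and the served provider in the result is a deliberate choice: it makes every failover visible to whatever logging sits downstream.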

3. Real-Time Threat Detection & Automated Response

AI infrastructure faces unique threats: prompt injection attacks, data exfiltration attempts, and abuse of high-cost models. We’ve built a 24/7 Security Operations Center (SOC) that monitors for:

Anomalous request patterns (e.g., sudden spikes that could indicate credential theft)

Prompt injection attempts (malicious prompts designed to extract training data or bypass safety filters)

Rate limit violations and abuse patterns

Provider-side incidents (we track status across all 15+ providers and proactively reroute traffic)

When threats are detected, our system automatically triggers remediation workflows—rate limiting, temporary blocks, or switching to alternate providers—all while alerting your team.
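As a rough illustration of how a request spike can be mapped to a graduated response, the sketch below counts requests per API key in a sliding time window. The class name `SpikeDetector`, the thresholds, and the action labels are assumptions made for this example; they do not describe the rules our SOC actually runs.

```python
from collections import defaultdict, deque


class SpikeDetector:
    """Sliding-window request counter that maps volume to an action."""

    def __init__(self, window_seconds: float, max_requests: int):
        self.window = window_seconds
        self.max_requests = max_requests
        self._events = defaultdict(deque)  # api_key -> timestamps inside the window

    def record(self, api_key: str, now: float) -> str:
        """Register one request and return 'allow', 'rate_limit', or 'block'."""
        q = self._events[api_key]
        q.append(now)
        # Drop timestamps that have fallen out of the window.
        while q and q[0] <= now - self.window:
            q.popleft()
        if len(q) > 2 * self.max_requests:
            return "block"       # far above the limit: temporary block
        if len(q) > self.max_requests:
            return "rate_limit"  # above the limit: throttle
        return "allow"


detector = SpikeDetector(window_seconds=60, max_requests=3)
# Eight requests from one key, one per second.
actions = [detector.record("key-a", float(t)) for t in range(8)]
```

In this run the first three requests are allowed, the next three are rate limited, and the last two are blocked. Once the window has passed, the same key is allowed again.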

4. Data Confidentiality: Your Privacy is Paramount

Unlike direct integrations with AI providers, where your prompts may be used for model training (unless you opt out), Infron AI enforces strict data policies:

No training on customer data: Your prompts and responses are never used to train models

Configurable retention: Set data retention policies from zero-logging (ephemeral requests) to 90-day audit logs

Geographic compliance: Route requests through specific regions to meet GDPR, CCPA, or other regulatory requirements

For our enterprise customers in banking and healthcare, we offer dedicated tenancy options where your traffic never shares infrastructure with other customers.
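The retention and region settings described above can be pictured as a small, validated policy object. This is a sketch under stated assumptions: `DataPolicy`, its field names, and the region strings are hypothetical and do not reflect Infron's configuration schema. Only the 0 to 90 day range comes from the text.

```python
from dataclasses import dataclass
from typing import FrozenSet

MAX_RETENTION_DAYS = 90  # upper bound quoted in the text


@dataclass(frozen=True)
class DataPolicy:
    retention_days: int = 0                       # 0 means zero-logging (ephemeral)
    allowed_regions: FrozenSet[str] = frozenset()  # empty means no region restriction

    def __post_init__(self):
        if not 0 <= self.retention_days <= MAX_RETENTION_DAYS:
            raise ValueError(
                f"retention_days must be between 0 and {MAX_RETENTION_DAYS}"
            )

    @property
    def ephemeral(self) -> bool:
        """True when nothing should be logged or cached."""
        return self.retention_days == 0

    def permits_region(self, region: str) -> bool:
        """True when a request may be routed through the given region."""
        return not self.allowed_regions or region in self.allowed_regions


default_policy = DataPolicy()
eu_policy = DataPolicy(retention_days=30, allowed_regions=frozenset({"eu-west"}))
```

Validating at construction time means an out-of-range retention value fails immediately rather than surfacing later as an unexpected log.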

5. Unified IAM & Granular Access Control

Security isn’t just about infrastructure; it’s about who has access to what. Infron AI provides:

Role-based access control (RBAC): Define fine-grained permissions for team members

API key management: Generate, rotate, and revoke keys with audit trails

Provider allowlists: Restrict which AI models your applications can access (e.g., only GDPR-compliant European providers)

Budget controls: Prevent runaway costs from misconfigurations or attacks

This is especially critical for enterprises where different teams use different models, but central IT needs visibility and control.
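Here is one way the checks above could compose on a single request: role first, then model allowlist, then budget. Treat it as a sketch, not our API: `ApiKeyPolicy`, `ROLE_PERMISSIONS`, `authorize`, the role names, and the reason strings are all invented for illustration.

```python
from dataclasses import dataclass
from typing import FrozenSet, Tuple

# Hypothetical role-to-permission mapping.
ROLE_PERMISSIONS = {
    "admin": {"invoke", "rotate_keys"},
    "developer": {"invoke"},
    "viewer": set(),
}


@dataclass
class ApiKeyPolicy:
    role: str
    allowed_models: FrozenSet[str]
    monthly_budget_usd: float
    spent_usd: float = 0.0


def authorize(
    policy: ApiKeyPolicy, model: str, estimated_cost_usd: float
) -> Tuple[bool, str]:
    """Return (allowed, reason) for a single model invocation."""
    if "invoke" not in ROLE_PERMISSIONS.get(policy.role, set()):
        return False, "role_not_permitted"
    if model not in policy.allowed_models:
        return False, "model_not_allowlisted"
    if policy.spent_usd + estimated_cost_usd > policy.monthly_budget_usd:
        return False, "budget_exceeded"
    return True, "ok"


dev = ApiKeyPolicy(
    role="developer",
    allowed_models=frozenset({"model-a"}),
    monthly_budget_usd=10.0,
    spent_usd=9.5,
)
decision = authorize(dev, "model-a", 0.25)
```

Returning a reason alongside the decision keeps denials explainable, which matters when central IT has to answer why a team's request was refused.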

6. Operation Auditability: Full Transparency

Every request, every failover, every configuration change—logged and auditable. Our audit logs include:

Timestamp, user, API key used

Source IP and geographic location

Model requested vs. model served (in case of failover)

Latency, token count, cost

Error codes and retry attempts

For regulated industries, these logs are immutable and exportable for compliance audits.
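One common way to make a log tamper-evident is to chain each entry's hash to the previous one. The sketch below applies that technique to a record carrying the fields listed above. It is an assumption on our part that this is a useful mental model; it is not a description of how Infron stores audit logs, and `AuditRecord`, `chain_hash`, `build_chain`, and `verify_chain` are hypothetical names.

```python
import hashlib
import json
from dataclasses import asdict, dataclass, replace
from typing import List, Optional

GENESIS = "0" * 64  # placeholder hash that precedes the first record


@dataclass(frozen=True)
class AuditRecord:
    timestamp: str
    user: str
    api_key_id: str
    source_ip: str
    country: str
    model_requested: str
    model_served: str
    latency_ms: int
    tokens: int
    cost_usd: float
    error_code: Optional[str]
    retries: int


def chain_hash(prev_hash: str, record: AuditRecord) -> str:
    """Hash a record together with the hash of the record before it."""
    payload = json.dumps(asdict(record), sort_keys=True)
    return hashlib.sha256((prev_hash + payload).encode("utf-8")).hexdigest()


def build_chain(records: List[AuditRecord]) -> List[str]:
    hashes, prev = [], GENESIS
    for record in records:
        prev = chain_hash(prev, record)
        hashes.append(prev)
    return hashes


def verify_chain(records: List[AuditRecord], hashes: List[str]) -> bool:
    """True only if every record still matches its stored hash."""
    return build_chain(records) == hashes


first = AuditRecord("2026-01-01T00:00:00Z", "alice", "key-1", "203.0.113.7", "DE",
                    "model-a", "model-a", 120, 512, 0.004, None, 0)
second = replace(first, timestamp="2026-01-01T00:00:05Z",
                 model_served="model-b", retries=1)
log = [first, second]
hashes = build_chain(log)

# Editing an earlier record after the fact breaks verification.
tampered = [replace(first, cost_usd=0.0), second]
```

Because each hash depends on everything before it, changing one historical entry invalidates every later hash, which is what makes exported logs checkable by an auditor.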

7. Continuous Risk Evaluation & Trusted Assurance

Security is not a one-time setup—it’s a continuous process. We conduct:

Quarterly third-party penetration tests

Automated vulnerability scanning of all dependencies

Regular security audits of our codebase and infrastructure

Compliance certifications (SOC 2 Type II in progress, ISO 27001 alignment)

We also maintain a public security policy and a responsible disclosure program for security researchers.

Why This Matters: Real-World Impact

These security measures aren’t theoretical; they enable real business outcomes for our customers. When YTL AI Labs deployed their ILMU platform across banking and telecom sectors, they needed ironclad guarantees that customer data would never leak across tenants or to unauthorized models. Our environmental isolation and data confidentiality policies gave them the confidence to deploy at scale in industries where data breaches can have catastrophic consequences.

Agnes AI faced a different challenge with their 5 million global users. They needed to ensure that user conversations remained private while dynamically routing between providers for cost optimization. The tension between privacy and operational efficiency is real, but our end-to-end encryption and zero-logging mode made it possible to achieve both without compromise.

For Pax Historia, a YC W26 company, the priority was preventing abuse of their AI-powered game platform while maintaining low latency for players worldwide. Gaming applications are particularly unforgiving when it comes to performance—even small delays can ruin the player experience. Our edge-based threat detection and provider failover kept their experience smooth and secure, blocking threats without introducing the lag that would drive players away.

The Infron Difference: Security Without Compromise

Many organizations face a false choice: security or speed, control or flexibility, compliance or cost-efficiency. At Infron AI, we reject this tradeoff.

Our security-first architecture enables:

✅ Higher availability (99.9% uptime via multi-provider redundancy)

✅ Lower costs (60% savings through intelligent routing, as Agnes AI experienced)

✅ Faster performance (edge deployment minimizes latency)

✅ Full compliance (enterprise-grade policies without vendor lock-in)

What’s Next: Our Security Roadmap

We’re constantly evolving. Here’s what’s coming in 2026:

Advanced DLP (Data Loss Prevention): Automatically detect and redact PII in prompts

Private model hosting: Deploy your fine-tuned models within Infron’s secure infrastructure

Enhanced compliance packs: Pre-configured settings for HIPAA, PCI-DSS, and FedRAMP

Real-time security dashboards: Visualize threats, anomalies, and compliance status in one place
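To give a feel for the DLP item on this list, here is the simplest possible form of prompt redaction: a regular expression that masks email addresses before a prompt leaves your application. It is only a sketch of the general idea, not a preview of the planned feature. The pattern, the `[EMAIL]` placeholder, and the function name `redact_emails` are assumptions, and real PII detection has to cover far more than email addresses.

```python
import re

# Deliberately simple pattern; it will not catch every valid address format.
EMAIL_PATTERN = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")


def redact_emails(prompt: str, placeholder: str = "[EMAIL]") -> str:
    """Replace anything that looks like an email address with a placeholder."""
    return EMAIL_PATTERN.sub(placeholder, prompt)


clean = redact_emails("Contact jane.doe@example.com about the invoice")
```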

Build AI with Confidence

At Infron, we believe that security shouldn’t be a bottleneck to innovation. It should be the catalyst that enables you to move faster, scale confidently, and focus on what truly matters: building exceptional AI-powered experiences.

Whether you’re a fast-growing startup or a Fortune 500 enterprise, our security-first platform gives you the peace of mind to deploy AI at scale, across any model, any provider, anywhere in the world.

Ready to learn more? Explore our security documentation or reach out to our team for a personalized security assessment.

About Infron

Infron is the unified AI infrastructure platform trusted by innovative companies worldwide. We provide secure, reliable, and cost-effective access to 100+ AI providers through a single API, running at the edge for maximum performance. From startups to enterprises, we help organizations build AI with confidence.

Start building with Infron today.